World models are giving AI a body. Engineering R&D will never be the same.

Fri April, 2026

We spent thirty years teaching AI to read. Then to write. Now we’re teaching it to understand how the physical world actually works — and the implications for deep-tech R&D are more profound than anything that came before.

A robot cannot learn to walk by reading about walking. It needs to fall. Repeatedly. Expensively. On real hardware that breaks, in real environments that surprise it, with real consequences every time something goes wrong.

That has been the fundamental bottleneck of Physical AI — teaching machines to act in the physical world requires the physical world to be present during training. Until now.

World models change this equation entirely. Not incrementally. Structurally.

“Physical AI needs to be trained digitally first. It needs a digital twin of itself — the policy model — and a digital twin of the world — the world model.”

This passage from NVIDIA’s Cosmos paper contains more strategic implication than most technology roadmaps written in the last decade. Let me explain why, and why it matters specifically to every engineering organisation running deep-tech R&D programmes.

What world models actually are

Forget the jargon for a moment. A world model is an AI that has learned how the physical world behaves — how objects move, how forces interact, how materials respond, how systems fail. Not by reading descriptions of physics. By consuming millions of hours of real-world video, sensor data, and physical interaction — and building an internal model of what comes next.

Large language models learned that “Paris” tends to follow “capital of France.” World models learn that a thermal runaway in a lithium cell tends to follow a specific pattern of heat accumulation at the electrode boundary. The learning mechanism is similar. The substrate is completely different. One models language. The other models physical reality.
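To make the analogy concrete, here is a minimal sketch of "predicting what comes next" for a physical quantity rather than a word. It is a toy, not how Cosmos or any production world model works: the cooling law, the constants, and every function name are assumptions chosen for illustration. The model only sees a temperature log and fits a next-step rule from it.

```python
# Toy "world model": learn next-step dynamics from observed data alone.
# The cooling law and all constants are illustrative assumptions.

def simulate_cooling(t0, ambient, k, steps):
    """Hidden ground truth (Newton's law of cooling) that generates the log."""
    temps = [t0]
    for _ in range(steps):
        temps.append(temps[-1] + k * (ambient - temps[-1]))
    return temps

def fit_next_step(series):
    """Least-squares fit of T[t+1] = a * T[t] + b from consecutive pairs."""
    xs, ys = series[:-1], series[1:]
    n = len(xs)
    mean_x = sum(xs) / n
    mean_y = sum(ys) / n
    var_x = sum((x - mean_x) ** 2 for x in xs)
    cov_xy = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    a = cov_xy / var_x
    b = mean_y - a * mean_x
    return a, b

def predict_next(model, temp):
    """Predict the next temperature from the current one."""
    a, b = model
    return a * temp + b

# The model is given only the observed temperatures, never the equation.
observed = simulate_cooling(t0=90.0, ambient=25.0, k=0.1, steps=50)
model = fit_next_step(observed)
next_temp = predict_next(model, 60.0)
```

With these assumed constants the hidden rule is T[t+1] = 0.9 * T[t] + 2.5, and the fit recovers those two coefficients from the log alone. A real world model replaces the two-parameter line with a large network and the temperature log with video and sensor streams, but the training signal is the same: the gap between the predicted next state and the observed one.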

20M: hours of real-world data used to train NVIDIA Cosmos models

9,000T: tokens processed, comparable to the scale of leading LLMs

$50T: the manufacturing and logistics industries Jensen Huang says Physical AI will transform

NVIDIA launched Cosmos at CES 2025 and has moved with unusual speed since — Cosmos 2.5, Cosmos Reason, and by March 2026, Cosmos 3: the first world foundation model unifying synthetic world generation, vision reasoning, and action simulation in a single architecture. The pace of iteration signals something important: this is not a research project. It is a platform investment at infrastructure scale.

Arm’s 2026 predictions stated it plainly: “World models will emerge as a foundational tool for building and validating physical AI systems — from robotics and autonomous machines to molecular discovery engines.”

Note those last three words. Molecular discovery engines. This is not a robotics story. This is an R&D story.

The three things world models unlock that nothing else could

1. Training without consequence. An AI can fail ten thousand times inside a world model before touching real hardware. For engineering R&D this means a concept can be tested in a physics-accurate simulation environment before a single physical prototype is built. The cost of exploration collapses from millions to thousands.

2. Generating the rare and dangerous. Real-world testing cannot surface every failure mode: thermal runaway at unusual duty cycles, fatigue failure under edge-case loading, resonance behaviour in novel geometries. World models generate synthetic scenarios that physical test programmes would never reach. Every gap in physical test coverage can be closed digitally.
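Both ideas above can be sketched with a toy simulator. The lumped thermal model below is a placeholder I have made up for illustration: the heating and cooling coefficients, the 80 °C limit, and the function names are all assumptions, and a real world model would stand in for `peak_temperature`. The point is only the workflow: sweep operating conditions a test bench would rarely reach, fail cheaply, and collect the failures.

```python
# Toy scenario sweep: all physics and limits below are made-up placeholders.

def peak_temperature(duty_cycle, ambient_c, steps=600):
    """Crude lumped thermal model: heating scales with duty cycle squared."""
    temp = ambient_c
    peak = temp
    for _ in range(steps):
        heating = 3.0 * duty_cycle ** 2        # assumed heat input per step
        cooling = 0.05 * (temp - ambient_c)    # assumed loss to ambient
        temp += heating - cooling
        peak = max(peak, temp)
    return peak

def sweep(duty_cycles, ambients, limit_c=80.0):
    """Run every combination in simulation and keep the ones that overheat."""
    failures = []
    for ambient in ambients:
        for duty in duty_cycles:
            peak = peak_temperature(duty, ambient)
            if peak > limit_c:
                failures.append((duty, ambient, round(peak, 1)))
    return failures

duty_cycles = [i / 20 for i in range(21)]      # 0.0 to 1.0 in steps of 0.05
ambients = [-10.0, 25.0, 45.0]                 # cold, nominal, hot-climate cases
failures = sweep(duty_cycles, ambients)

for duty, ambient, peak in failures:
    print(f"duty={duty:.2f} ambient={ambient:.0f}C peak={peak}C exceeds limit")
```

Every one of these 63 runs "fails" at zero hardware cost, and the overheating cases cluster at high duty cycle in the hot-climate condition, which is exactly the corner a physical programme tends to test last.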

Why this is not just a robotics story

The immediate applications of world models — autonomous vehicles, industrial robots, warehouse automation — are highly visible. Jensen Huang announcing partnerships with ABB, KUKA, FANUC, and Medtronic at GTC 2026 made clear that robotics is the first wave.

But the underlying capability — an AI that reasons about physical reality, predicts physical outcomes, and learns from physical feedback — is not limited to systems that move. It applies to any engineering problem where the gap between what the model predicts and what the physical world produces is the critical constraint.

Battery thermal architecture. Hybrid propulsion system design. Semiconductor packaging under thermal and mechanical stress. Structural integrity of next-generation aerospace components. In every one of these domains, the fundamental problem is identical: we need to know whether a concept will work in physical reality before committing the resources to build it.

World models are the mechanism by which that question gets answered — not in months, not in millions of dollars of prototype testing, but in hours, digitally, before any metal is cut.

“The next frontier of AI is not language. It is physics. Not the equations in textbooks — the physics that actually governs how things break, warp, heat, and fail in the real world.”

The industrial AI stack is being redrawn — right now

Siemens and NVIDIA announced their Industrial AI Operating System partnership at CES 2026, with Siemens’ Digital Twin Composer launching mid-2026 on the Xcelerator Marketplace. Dassault Systèmes and NVIDIA announced a long-term strategic partnership in February 2026 to build science-validated Industry World Models. The two companies made that announcement at 3DEXPERIENCE World 2026, with Jensen Huang on stage.

The world’s two largest industrial software companies are racing to integrate world model infrastructure into their platforms simultaneously. The reason is straightforward: whoever owns the world model layer owns the data flywheel. Every simulation run, every physical test result, every divergence between prediction and reality — all of it becomes training data for a world model that gets smarter with every programme.
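The flywheel can be illustrated with a deliberately small calibration loop. This is a sketch under stated assumptions, not any vendor's pipeline: the "test bench" is a hypothetical function with a hidden gain of 1.3, the surrogate is a single-parameter model, and the update is a plain least-mean-squares step. Each divergence between prediction and measurement is used to correct the model.

```python
# Toy calibration loop: the "rig" and its gain are invented for illustration.

def bench_measurement(load):
    """Stand-in for a physical test result; the true gain is hidden from the model."""
    return 1.3 * load

def calibrate(gain, loads, learning_rate=0.1, passes=3):
    """Nudge the surrogate's gain using each prediction-vs-reality residual."""
    errors = []
    for _ in range(passes):
        for load in loads:
            predicted = gain * load
            residual = bench_measurement(load) - predicted
            errors.append(abs(residual))
            gain += learning_rate * residual * load   # least-mean-squares update
    return gain, errors

loads = [0.5 + 0.1 * i for i in range(16)]     # 16 assumed test conditions
gain, errors = calibrate(gain=1.0, loads=loads)

print(f"calibrated gain: {gain:.4f}")
print(f"first residual: {errors[0]:.4f}, last residual: {errors[-1]:.6f}")
```

The surrogate starts with the wrong gain and converges toward the hidden value as results accumulate. The organisation that owns the measurements owns the correction, which is why proprietary programme data matters more here than model architecture.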

This is the same compounding dynamic that made LLMs so powerful. Except instead of language, it is engineering knowledge. And unlike language — which is abundant on the internet — engineering physics data from real programmes is scarce, proprietary, and extraordinarily valuable.

The missing layer nobody is talking about

Here is what the Siemens and Dassault announcements do not address, despite the scale of the investment behind them.

World models are exceptional at simulating concepts that already exist. They can validate a geometry. They can predict how a design performs under physical load. They can generate synthetic failure scenarios for a known architecture.

What they cannot do — what no world model in production today can do — is decide which concept is worth building in the first place.

The upstream R&D decision — which direction to explore, which governing physics to harness, which cross-domain insight from aerospace or biomedical or nuclear engineering applies to this battery thermal problem — remains entirely outside the world model’s scope. World models are extraordinary validators. They are not innovators. They cannot traverse 42 industries by physics equation and surface a solution from satellite propulsion cooling as the answer to an EV battery challenge.

That upstream intelligence layer — the one that decides what to put into the world model for validation — is the gap the industry has not closed. It is the layer that sits before the digital twin, before the surrogate, before the first simulation run.

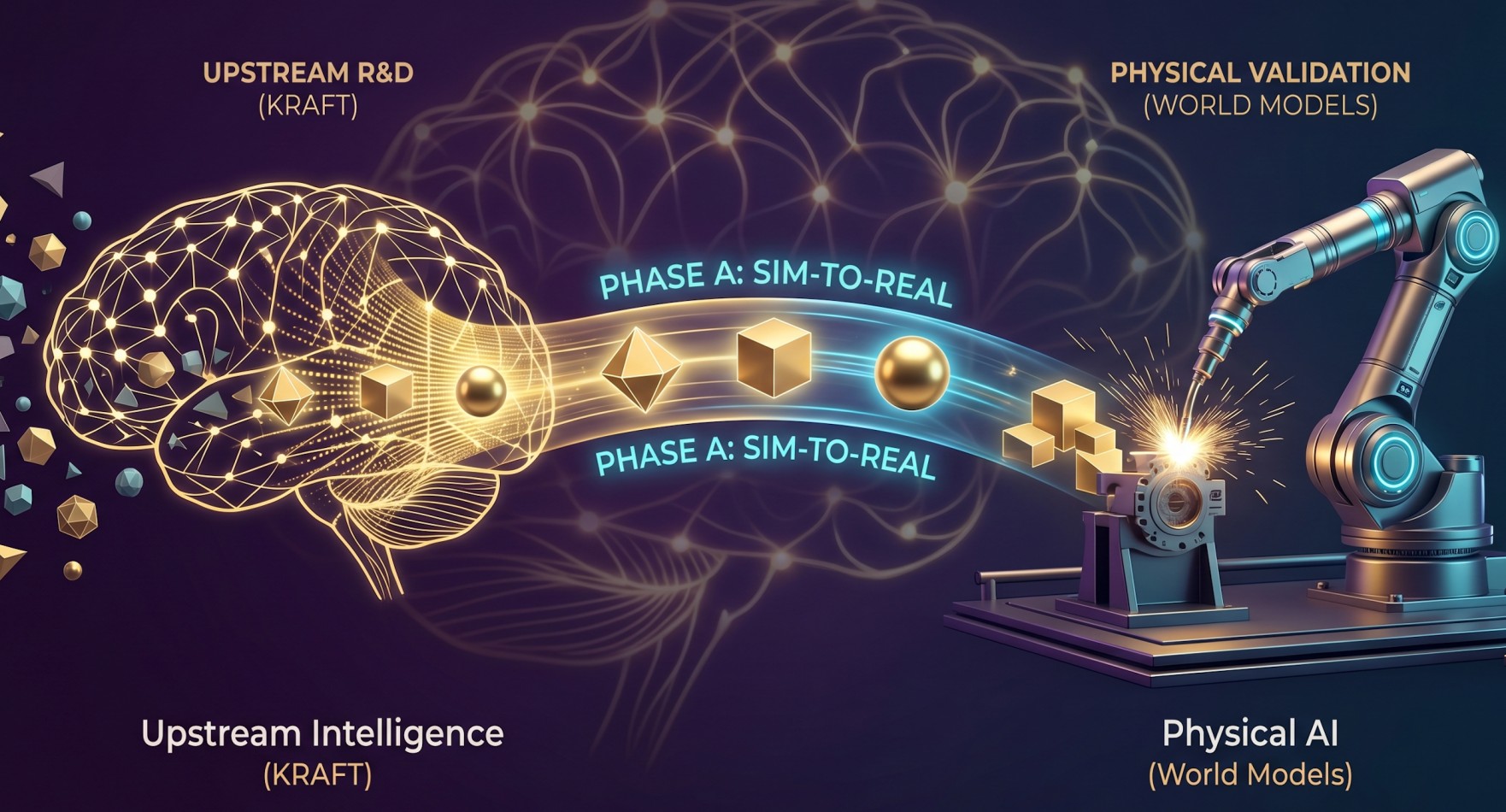

KRAFT operates at the moment before a concept exists — searching 42 industries by governing physics equation, generating 2,500 variants via Latin Hypercube Sampling, validating against PhysicsNeMo surrogate models, and surfacing the top 3–5 physics-validated concepts with a full IP provenance chain. In Phase A, those concepts auto-instantiate as digital twins in NVIDIA Omniverse. In Phase B, physical test bench data flows back to calibrate the surrogates — on the OEM’s own infrastructure, never leaving their data governance boundary. KRAFT is the upstream intelligence layer. World models are the downstream validation infrastructure. They are not competitors. They are sequential.
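For readers unfamiliar with Latin Hypercube Sampling, the sketch below shows the general technique followed by a surrogate-based ranking. It is not KRAFT's implementation. The two design parameters, their bounds, and the `toy_surrogate` scoring function are hypothetical stand-ins, and a trained physics surrogate would replace the scoring function in practice.

```python
import random

def latin_hypercube(n_samples, bounds, seed=0):
    """Draw n_samples points so each dimension has one point per equal-width stratum."""
    rng = random.Random(seed)
    columns = []
    for low, high in bounds:
        strata = list(range(n_samples))
        rng.shuffle(strata)                    # decouple strata across dimensions
        width = (high - low) / n_samples
        columns.append([low + (s + rng.random()) * width for s in strata])
    return [tuple(col[i] for col in columns) for i in range(n_samples)]

def toy_surrogate(variant):
    """Placeholder score (lower is better); stands in for a trained surrogate."""
    fin_height_mm, coolant_flow_lpm = variant
    return (fin_height_mm - 12.0) ** 2 + 4.0 * (coolant_flow_lpm - 3.0) ** 2

# Hypothetical design space: fin height in mm, coolant flow in litres per minute.
bounds = [(5.0, 20.0), (1.0, 6.0)]
variants = latin_hypercube(2500, bounds)

# Score every variant with the surrogate and keep the five best candidates.
top = sorted(variants, key=toy_surrogate)[:5]
for fin, flow in top:
    print(f"fin={fin:.2f} mm, flow={flow:.2f} L/min, score={toy_surrogate((fin, flow)):.4f}")
```

The reason to prefer this over plain random sampling is coverage: with 2,500 samples, every parameter range is divided into 2,500 slices and each slice is hit exactly once, so no region of the design space is skipped by chance.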

What this means for engineering R&D leaders

If you run a Fortune 500 engineering organisation — automotive, aerospace, energy, semiconductor — three things are now true that were not true three years ago.

First, the cost of physical exploration is about to collapse. World models trained on your programme history will eventually allow your team to validate concepts digitally that previously required physical prototypes. The organisations building that programme history now will have a compounding advantage in 2028 that latecomers cannot easily acquire.

Second, your simulation team’s role is shifting. They will spend less time running exploratory simulations on concepts of uncertain quality and more time running confirmatory simulations on concepts that have already survived physics-based upstream validation. That is a better use of your most expensive computational resource.

Third — and most importantly — the upstream decision remains the highest-leverage point in your R&D process. World models do not change this. They make the consequences of a good upstream decision more valuable and the consequences of a bad one more expensive. Choosing the wrong direction to simulate, at scale, in a world model, is a faster and more expensive version of choosing the wrong direction to prototype.

The intelligence that decides which direction is worth exploring — before the simulation, before the world model, before the prototype — is what determines whether the world model investment compounds or burns.

The next two years

World models are moving from research to production faster than most infrastructure technologies. Cosmos 3 launched in March 2026. Siemens’ Digital Twin Composer ships mid-2026. Dassault’s Industry World Models are in active development with NVIDIA infrastructure. The ICLR 2026 World Models workshop documented the field moving from loose concept to scalable engineering tool in under two years.

The organisations that will benefit most are not the ones that deploy world models earliest. They are the ones that have the best upstream decisions flowing into their world models — the clearest problem definitions, the most physics-validated concepts, the deepest cross-domain intelligence.

Because a world model fed bad inputs produces confident wrong answers faster. And in deep-tech R&D, confident wrong answers at speed are more expensive than slow uncertainty.

The missing layer — upstream R&D intelligence — is not a nice-to-have before the world model era. It is a prerequisite for it.

Sridhar DP is Founder & CEO of KREAT Inc., building KRAFT — a Scientific AI platform for upstream R&D intelligence. KRAFT is an NVIDIA Inception Programme member with a PCT patent filed.

Learn more at kreat.ai/kraft · Connect with Sridhar on LinkedIn

Human brain, AI pen. Strategic insights by Sridhar, wordsmithing by Claude AI.
